AI Homework

This article by Ben Thompson for his Stratechery letter may be of interest to subscribers. Here is a section:

The difference is that ChatGPT is not actually running python and determining the first 10 prime numbers deterministically: every answer is a probabilistic result gleaned from the corpus of Internet data that makes up GPT-3; in other words, ChatGPT comes up with its best guess as to the result in 10 seconds, and that guess is so likely to be right that it feels like it is an actual computer executing the code in question.

This raises fascinating philosophical questions about the nature of knowledge; you can also simply ask ChatGPT for the first 10 prime numbers:

Those weren’t calculated, they were simply known; they were known, though, because they were written down somewhere on the Internet. In contrast, notice how ChatGPT messes up the far simpler equation I mentioned above:

For what it’s worth, I had to work a little harder to make ChatGPT fail at math: the base GPT-3 model gets basic three digit addition wrong most of the time, while ChatGPT does much better. Still, this obviously isn’t a calculator: it’s a pattern matcher — and sometimes the pattern gets screwy. The skill here is in catching it when it gets it wrong, whether that be with basic math or with basic political theory.
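To make the contrast in the quoted passage concrete, here is a minimal Python sketch of what computing the first 10 prime numbers deterministically looks like. The function name `first_primes` and the trial-division approach are illustrative choices of mine; they are not taken from Thompson's article and say nothing about how ChatGPT works internally.

```python
def first_primes(count):
    """Return the first `count` prime numbers using trial division."""
    primes = []
    candidate = 2
    while len(primes) < count:
        # A candidate is prime if no smaller prime up to its square root divides it.
        if all(candidate % p != 0 for p in primes if p * p <= candidate):
            primes.append(candidate)
        candidate += 1
    return primes


print(first_primes(10))  # [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
```

Run twice, this gives the same list twice, because each number is checked rather than recalled. That is the property a pattern matcher cannot guarantee.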

The probabilistic versus deterministic issue boils down to the problem all models face: quality of data. The best way for a chatbot to function is to draw only on a confined sample of reliable answers. In other words, for an answer to be sure to be correct, the dataset has to be filled with deterministic, infallible answers.

Without absolute certainty about the quality of the data, there is a significant margin for error in the answer. Chatbots are increasingly capable of writing well, but good writing is defined by adherence to deterministic grammatical rules and comparison with real-world usage, so there is a hard point of comparison for what is in fact the correct word order.

Chatbots will easily be able to take phone calls, provide answers from well-defined parameters and even search the internet for the highest-ranked answer to a question. That does not mean the answer will be correct, however.

I do wonder where the confluence is going to be between quantum computers and artificial intelligence. Quantum computers deploy possible world theory while AI relies on the wisdom of crowds. They are very different big data solutions, but both use a line of best fit to come up with the most probable answer.

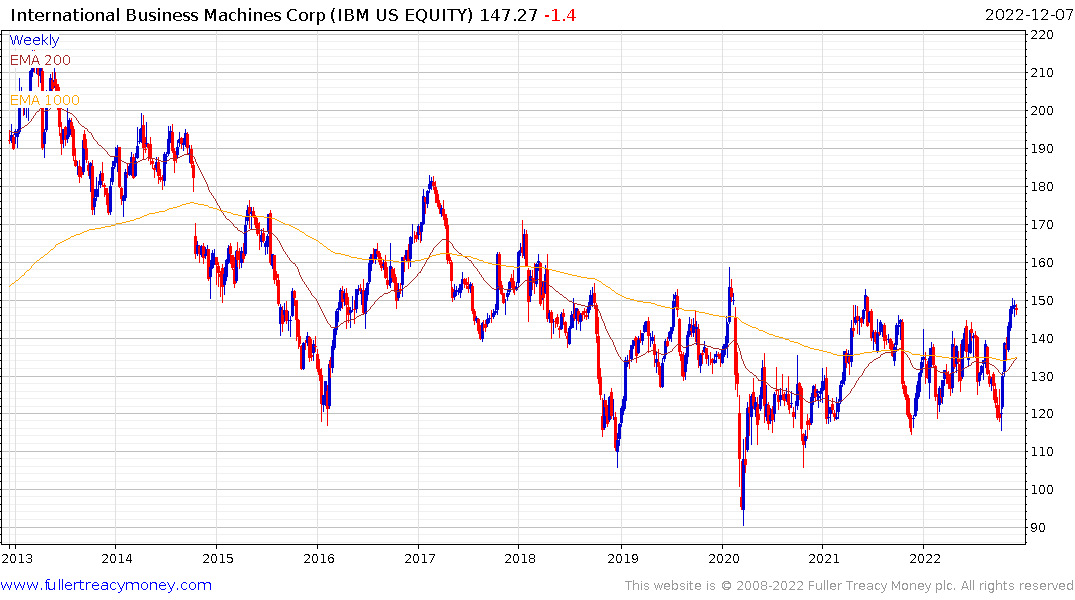

OpenAI now has to come up with a pricing model for this software and will need significant resources for customer support when its answers are incorrect. Meanwhile, IBM is bucking the trend in the wider technology sector as it tests the upper side of a four-year base formation.