It Takes Just 3 Stickers to Make a Tesla Drive Into Oncoming Traffic

This article by Ryan Whitwam for ExtremeTech may be of interest to subscribers. Here is a section:

Tesla’s Autopilot is a level two system that’s leaning into level three, but it might not have the necessary hardware to make it work. These vehicles use cameras, radar, and ultrasonic sensors to detect lanes and nearby vehicles. The Keen Security researchers reverse-engineered the software Tesla uses to see how easy it would be to fool those sensors. They didn’t need to make any changes to the car’s software — this is not a hack. They simply used three small reflective stickers on the roadway to trick Autopilot into thinking the lane had merged when it hadn’t.

According to the report, Tesla uses a feature called “detect_and_track” to identify lane markers. It uses several factors to avoid incorrect decisions like road shoulder location, lane history, and the distance to various objects. However, the reflective stickers appear to the car like lane markers, directing it to merge. These stickers are almost invisible to drivers, and it would be trivially easy to place them on roadways.

Tesla’s Autopilot system does include emergency braking. So, it’s possible the car could stop itself in the event it swerved into oncoming traffic. However, there’s no guarantee the other cars would stop. Tesla says it is evaluating the report but notes that drivers are supposed to keep their hands on the wheel while Autopilot is engaged.

Here is a link to the original research conducted by Keen Security. Teaching a computer to see, in the way we understand that statement as humans, is an enormously difficult task, which is why the route to success has been through teaching computers to react to cues. Fiddle with the cues and the computer can be fooled. That suggests a major rewrite of code for autonomous systems, but the clear fix is simply to tell the computer to take more notice of its environment and the direction of the cars travelling on its periphery.

There is a “you need to break some eggs to make an omelette” attitude to the evolution of self-driving vehicles, with Tesla’s default defense being that you really should hold on to the wheel. Meanwhile, there have been videos circulating on YouTube where Tesla drivers have clearly been asleep while the car drives down the freeway at 70 miles an hour. That is the kind of thing that gives people confidence in autonomous vehicles.

While in Phoenix earlier this year, I was surprised by the almost complete absence of awareness among people that Waymo is testing autonomous vehicles in the suburbs. That tells me that while there are breathless articles being written about public disaffection with the prospect of driverless vehicles, there is not much evidence of it on the ground.

I believe autonomous vehicles are inevitable because the value proposition is so large for the companies concerned. Whether that takes 3, 5 or 10 years is the main question.

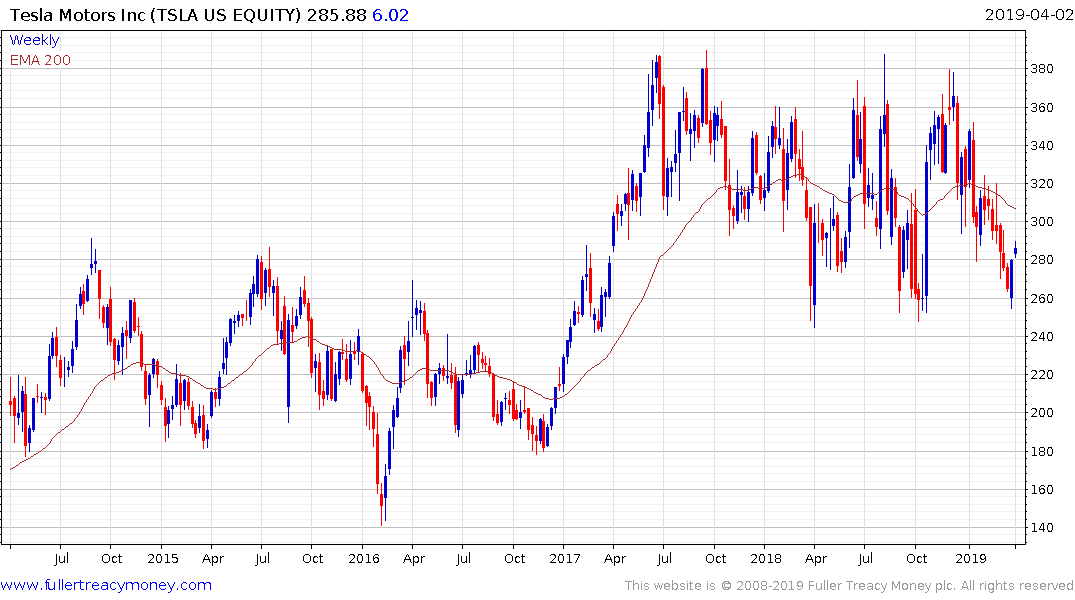

Tesla continues to firm from the lower side of its more than 2-year range.

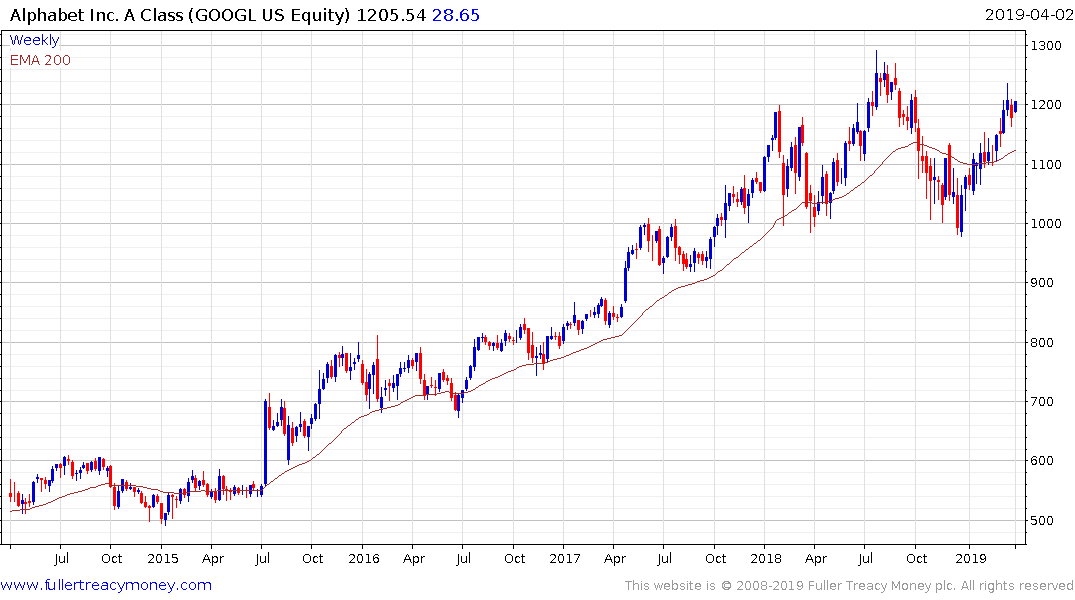

Alphabet has held a sequence of higher reaction lows since the December nadir and is back in the region of its peaks.